Mentor's Emulation Vision. New Solutions for New Challenges

August 27, 2018


What has changed – and what will change more dramatically going forward – are the specific use cases and bottlenecks that emulation will solve.

This is part one of a series. Read part two here.

The exciting thing about participating in an industry over the decades is watching change happen right before your eyes. Back when I was building and marketing computer systems, it was a different world. The scale was different, the technology was quaint by today’s standards and somehow the environment felt more relaxed than today’s pressure cooker.

Yet, while some things change, others stay the same. I was solving the same fundamental problem back then as today: ensuring that a system truly performs the way its architects intended. First as a user of verification tools and later, at Meta Systems, as an emulation provider, I was tackling different specific issues, largely what is referred to today as in-circuit emulation (or ICE). That focus simply reflected the biggest challenge of its day.

Today at Mentor, a Siemens business, we are still solving the basic problem of making sure that systems perform correctly. These days, however, those systems reside on a single piece of silicon, meaning that “rework” indicates expensive mask respins or major system revision. That has boosted the urgency of thorough verification within a “reasonable” timeframe.

What has changed – and what will change more dramatically going forward – are the specific use cases and bottlenecks that emulation will solve. So, as we advance swiftly into 2018, the Mentor team and I are anticipating the future problems we need to address. These are the things that keep managers and directors tossing and turning at night, hoping that, when it comes time for their teams to prove that their silicon or system works properly, all the verification pieces will be in place.

Emulation is taking an increasingly central role as a solution largely because of its speed and versatility in running large suites of tests quickly. But exactly which problems we solve depends on what industry you look at. We can use six industries as examples, each of which has its own challenges. Yet, if we look closer, some common themes emerge, giving us a roadmap that brings broad benefits across the industry.

Networking: This space has always been about processing traffic as quickly as possible. With software-defined networks (SDN), there’s more focus than ever on the hardware/software divide. The hardware circuits and computing platforms mean complex, high-performance ASICs, tightly coupled and co-packaged with memories, using the most aggressive possible technology. Combine that with new protocols and wireless PHYs – especially for the Internet of Things (IoT) and the relentless search for better security – and there’s plenty to challenge us here.

Storage: Solid state storage is inexorably replacing rotating media as the preferred way to store persistent data. That puts pressure on 3D NAND flash to increase its capacity, and it means new storage form factors and access protocols. Closer to the processor, both Hybrid Memory Cube (HMC) and High-Bandwidth Memory (HBM) involve stacks of memory dice. And a growing concern over protecting data adds security considerations to this space.

Mobile and multimedia: While these might seem distinct, one of the persistent challenges of the mobile market is pumping ever more data through it as a result of increased access to multimedia content. 5G cellular technology is the next step in that direction. The solutions demand processors built on the latest silicon process node, possibly co-packaged with memory. They demand hardware-accelerated graphics processing even as new, higher-resolution standards for drones, virtual reality, and 8K UHD video emerge. Applications must be verified as a whole, uniting software and hardware contributions in a single platform. And yet, despite all the computing muscle, energy consumption must be minimized for battery-powered devices.

Processors: Individual processors for servers need blazing technology, period. Systems-on-chip (SoCs) mean integrating one or more CPUs with memory and application-specific functionality on a single die. Each of those pieces, including the processor, can be optimized for speed, power or area. In most cases, the latest silicon technology is required. Then there’s the question of what, if any, functions should be offloaded and accelerated into hardware. And, increasingly, a secure processing environment is required, which may involve a combination of virtualization technologies (hardware and software) as well as ways to establish roots of trust within the processor.

Automotive: Imagine all of the previous industries combined and working seamlessly at 65 mph. That’s the vehicle of the future, with or without a driver. While computing power may not be the order of the day for the driving mechanisms, the infotainment center will need horsepower for processing multimedia. This is likely to involve co-packaging of CPUs and memories and graphics units. Meanwhile, the control side demands increasing numbers of sensors and actuators, which themselves continue to evolve rapidly. Electronic control units (ECUs) form an intermediate hierarchical level between low-level IP and the full system. New communication protocols like IEEE 802.11p must be selected and refined, and – as has been well demonstrated – all of this technology must operate securely.

Internet of Things: Like automotive, the IoT requires all of the previous elements, although with different challenges and tradeoffs. Highest on the list are new communications protocols, security and technology for sensing and actuating – all tuned either for battery-powered, resource-constrained edge devices, or for resource-rich cloud computing devices, or for hubs and routers that lie between the two extremes.

The emergent themes that we find in common with these application challenges are:

- Multi-die packaging, whether for memory or high-performance processing

- New standards and protocols for communications and memories

- Balancing hardware and software functionality at the system level

- Aggressive silicon process technology for high-performance processors, ASICs, flash and SoCs

- Security in the quickly moving automotive market

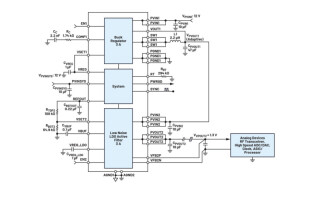

- Analog for sensing and actuating and for new wireless transmitters and receivers

These are what keep me up at night – except that we are already working to address them, making my nights less troubled. Over the coming year, you’ll see more in this space, with many of our experts writing about how each of these areas might impact verification and what emulation’s role needs to be.

This is our vision for emulation. Much of it is a work in progress, but it always benefits from a greater understanding of the challenges our customers face. So hopefully these discussions will do more than help you sleep; hopefully they’ll inspire an ongoing conversation that lets us better match our solutions to your challenges.

Eric Selosse, Vice President and General Manager, Mentor, a Siemens Business

Eric Selosse, VP and GM of the Mentor Emulation division, joined Mentor in November 2000. Eric has extensive engineering and marketing experience. Prior to joining Mentor, he was in charge of Bull Open Systems Division. He has also held a variety of engineering and marketing management positions at IBM Networking Division, both in the U.S. and France. He holds an engineering degree in electronics.
