The Internet of Learning Things

August 10, 2018



When did artificial intelligence become popular again? It can be dated back to March 2016, when AlphaGo, the neural network-powered artificial intelligence (AI) developed by Google's DeepMind, beat South Korean champion Lee Sedol at the game of Go, a game reputed to be so complex that no machine would ever master it.

We are now in full “hype mode”, with even the popular press embracing the hopes and fears generated by intelligent machines. Very soon, your barber will talk to you about AI, and that will mark the end of the cycle. This reminds me of another hype we are all familiar with: the Internet of Things, or IoT. In fact, when you plot the Google Trends popularity curves for the search terms IoT and Artificial Intelligence, as shown in Figure 1, they follow a very similar pattern.

[Figure 1 | Google Trends, IoT (red) and Artificial Intelligence (blue)]

While several IoT startups have emerged and died, the IoT is still here. It is now incubating in the slow-growth phase that precedes the full blossoming of a new technology. And this makes me think about another likely, and not so distant, event: the collision between IoT and AI.

Things that think

Initially, most AI applications focused on Internet services from Facebook, Google, Amazon and the like, with algorithms running on servers equipped with multicore CPUs and GPUs operating at GHz frequencies and backed by terabytes of memory. Then AI reached high-performance consumer devices, appearing in smartphones, semi-autonomous cars, gaming consoles, TVs and smart speakers. These devices are technically “at the edge” and are often classified as “Internet of Things”, but they are atypical of the IoT species. They still require a lot of power, performance and memory, and they connect to the Internet over high-bandwidth links.

Most of the billions of devices foreseen by IoT luminaries are far more constrained. Bringing AI to these devices at the very edge of the network would have a transformative impact, but it would require striking the right balance between resources, connectivity, cost and power.

Some may ask: in such small devices, what use could AI at the edge possibly have? To picture it, let’s compare these devices with what animal brains can achieve.

Wikipedia gives an overview of the average number of neurons for several animals. For a few of them it also gives the number of synapses (connections between neurons), and we can extrapolate the synapse-to-neuron ratio for the rest. Suppose we try to emulate an animal brain’s processing power with a deep neural network, as shown in Table 1. A rough approximation is to assume one byte for each neuron (its output) and one byte for each synapse (its weight). The memory required, in bytes, is then roughly the number of neurons plus the number of synapses.
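To make the estimate concrete, here is a minimal Python sketch of the arithmetic behind Table 1. It applies the rule described above: one byte per neuron and one byte per synapse. For an animal whose synapse count is unknown, the sketch extrapolates that count from the average synapse-to-neuron ratio of animals where both counts are known. The function names and all input numbers are hypothetical placeholders, not the values used in the table.

```python
def mean_synapses_per_neuron(known):
    """Average synapse-to-neuron ratio over (neurons, synapses) pairs."""
    ratios = [synapses / neurons for neurons, synapses in known]
    return sum(ratios) / len(ratios)


def estimate_memory_bytes(neurons, synapses=None, ratio=None):
    """One byte per neuron (output) plus one byte per synapse (weight).

    If the synapse count is unknown, it is extrapolated as neurons * ratio.
    """
    if synapses is None:
        if ratio is None:
            raise ValueError("need a synapse count or a synapse/neuron ratio")
        synapses = round(neurons * ratio)
    return neurons + synapses


# Hypothetical reference animals with both counts known: (neurons, synapses).
known = [(1_000, 100_000), (10_000, 2_000_000)]
ratio = mean_synapses_per_neuron(known)  # (100 + 200) / 2 = 150.0

# Hypothetical animal with 50,000 neurons and no published synapse count.
print(estimate_memory_bytes(50_000, ratio=ratio))  # → 7550000 (about 7.6 MB)
```

Synapses dominate the total by far, so the estimate is driven almost entirely by the synapse-to-neuron ratio chosen for each animal.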

[Table 1 | Summary of the memory needed to emulate animal brains]

Ant power!

Microcontrollers (MCUs) typically offer between 1 and 4 megabytes of memory, so AI in an MCU would be about as capable as a jellyfish or a snail. Not much intelligence, you might say?
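Turning the same one-byte-per-value rule around gives a quick way to see what fits in a microcontroller. Each neuron costs one byte for its output plus one byte per incoming synapse. A memory budget B therefore supports roughly B / (1 + synapses per neuron) neurons. The sketch below makes three assumptions: 1 MB means 2^20 bytes, the ratio is a hypothetical 100 synapses per neuron, and the memory used by the firmware itself is ignored. Treat the results as upper bounds.

```python
MIB = 1024 * 1024  # assumption: "megabyte" taken as 2**20 bytes


def max_neurons(budget_bytes, synapses_per_neuron):
    """Largest neuron count whose outputs and weights fit in budget_bytes.

    Each neuron costs 1 byte for its output plus synapses_per_neuron bytes
    for its incoming weights (the one-byte-per-value approximation).
    Firmware, stack and buffers are ignored, so this is an upper bound.
    """
    return budget_bytes // (1 + synapses_per_neuron)


# Hypothetical ratio of 100 synapses per neuron, for illustration only.
for size_mib in (1, 4):
    print(f"{size_mib} MiB -> {max_neurons(size_mib * MIB, 100)} neurons")
# 1 MiB -> 10381 neurons
# 4 MiB -> 41527 neurons
```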

Well, let’s take a second look: snails and jellyfish can feed themselves, move, reproduce and hide when they feel threatened. They manage complex interactions with their environment, recognize patterns and control their bodies to create motion. This level of intelligence is enough for simple devices like thermostats, vacuum cleaners and doorbells. The idea is to have devices that make micro-decisions based on what they sense in their environment.

A device with a snail brain could at least recognize some micro-patterns, the same way a snail identifies what to eat and what to fear. Imagine a door lock detecting an attempt to tamper with it, or a washing machine choosing its program based on the color of your clothes.

Animals, even those with lower intelligence, are incredibly skilled at one thing that seems to elude computers: interaction. Animals interact instinctively with each other through sight, smell and touch. A snail brain in a device could bring a more sensitive user interface, one that is more intuitive and more natural.

Microprocessors (MPUs) with external memory in the gigabyte range can reach the level of an ant or a honeybee. The typical use case for insect-level intelligence is the swarm. A myriad of simple robots, each smart enough to exhibit swarm behavior, can together perform complex tasks, much as an ant colony does. If you are wondering how useful an insect brain can be, consider that there are an estimated 10 quintillion insects in the world and only 7.6 billion humans: very useful indeed.

The applications are vast: farming, smart cities, the environment, security, rescue and defense. Researchers at Harvard demonstrated a 1,024-robot swarm, the largest to date. Swarms of this kind aim to achieve remarkable colony-level feats, as ant and honeybee colonies do, such as transporting large objects or autonomously building human-scale structures.

Enabling endpoint intelligence

In feature-rich embedded devices, from smartphones to security cameras and cars, machine vision has been driving AI adoption. Machine vision based on convolutional neural networks (CNNs) has concrete use cases, a large potential market and an efficient algorithm, so it lends itself well to hardware acceleration. Today, more and more devices capable of image acquisition and processing integrate a CNN accelerator.
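To see why CNN machine vision maps so well onto dedicated hardware, it helps to look at its core operation. The Python sketch below is a plain single-channel 2-D convolution with stride 1 and no padding. Like most deep learning frameworks, it actually computes a cross-correlation. It is illustrative only and does not describe how any particular accelerator is implemented. Almost all of the work sits in the regular multiply-accumulate loop, and that kind of fixed, data-parallel pattern is what hardware accelerators are typically built to speed up.

```python
def conv2d_valid(image, kernel):
    """Single-channel 2-D convolution (cross-correlation, as in most CNN
    frameworks) with 'valid' padding and stride 1."""
    ih, iw = len(image), len(image[0])
    kh, kw = len(kernel), len(kernel[0])
    out = []
    for r in range(ih - kh + 1):
        row = []
        for c in range(iw - kw + 1):
            acc = 0
            # Multiply-accumulate over the kernel window: this MAC loop is
            # the regular, repetitive work that accelerators parallelize.
            for i in range(kh):
                for j in range(kw):
                    acc += image[r + i][c + j] * kernel[i][j]
            row.append(acc)
        out.append(row)
    return out


image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
edge = [[-1, 1]]  # responds where intensity increases from left to right
print(conv2d_valid(image, edge))  # [[0, 1, 0], [0, 1, 0], [0, 1, 0]]
```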

Enabling AI in smaller IoT devices at the endpoint of the network is not as simple, because many of the use cases are still unproven. Without a clear target workload, it is difficult to define the right hardware acceleration. The route many hardware companies are therefore taking is to enable AI on general-purpose controllers through tools and software, and to monitor how things evolve.

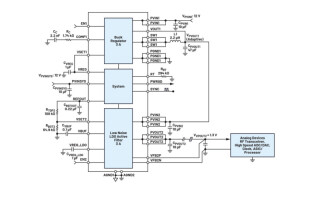

To this end, Renesas Electronics was one of the first companies to release an embedded AI solution (e-AI). It lets users translate a network trained in TensorFlow or Caffe into code their MCUs can run. The tools include an e-AI translator, which converts the neural network into C code usable by the MCU tools, and an e-AI checker, which predicts the performance of the translated network. Renesas has identified several use cases, such as predictive maintenance, but the intention is to let users and the community discover new applications and innovate. This is why a tutorial is available for the Renesas community “Gadget Renesas” boards. It is also why Renesas Electronics America has been promoting an e-AI design contest on the GR-PEACH board, which uses the RZ/A1H embedded MPU.
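Here is a rough illustration of what “translating a trained network to C code” can involve. The sketch takes one dense layer's floating-point weights, quantizes them to 8-bit integers and emits a C array declaration. Quantizing to one byte per weight matches the memory estimate used earlier. This is a toy generator written for this example. The function names, the symmetric int8 quantization scheme and the output format are assumptions, not the actual behavior or output of the Renesas e-AI translator.

```python
def quantize_int8(weights):
    """Symmetric per-tensor int8 quantization: real weight ≈ q * scale."""
    max_abs = max(abs(w) for row in weights for w in row)
    scale = max_abs / 127 if max_abs else 1.0
    q = [[max(-127, min(127, round(w / scale))) for w in row] for row in weights]
    return q, scale


def emit_c_layer(name, weights):
    """Emit a C declaration for one dense layer's quantized weights (toy format)."""
    q, scale = quantize_int8(weights)
    rows = ",\n".join("    {" + ", ".join(str(v) for v in row) + "}" for row in q)
    return (
        "#include <stdint.h>\n\n"
        f"/* dequantize: real_weight = q * {scale:.6g}f */\n"
        f"static const int8_t {name}_w[{len(q)}][{len(q[0])}] = {{\n"
        f"{rows}\n"
        "};\n"
    )


# Hypothetical trained weights for a 2x2 dense layer.
print(emit_c_layer("dense1", [[0.5, -1.0], [0.25, 0.0]]))
```

A real deployment would also need biases, activation functions and the inference code that reads these arrays. The point here is only that a trained model can end up as ordinary constant data, which an MCU toolchain compiles like any other C source.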

Billions and counting

With embedded AI, we are at a typical “business model definition” stage: the solution has been invented and is used by early adopters, but there is a gap before it achieves mainstream acceptance. Startups and innovative companies engaging in this field will need all their wits to cross that chasm. With new developments every day, many pivots will surely be necessary before they find a profitable use case and a growth engine.

If they do, the payoff could be huge. Remember, there are an estimated 10 quintillion (10,000,000,000 billion) insects on the planet. How many billions of “insect-smart” devices will we build, then? And what could we create with that many intelligent devices?