Striking a Balance on the Edge of Computing

December 31, 2019

As we sit on the cusp of a new decade, we can see that the next ten years will also be full of disruptive change. We have already begun the migration of society into a “smart” lifestyle that leverages the Internet of Things (IoT), the Cloud (and whatever the Fog turns out to be), and the latest generation of hardware into a matrix of functionality that will benefit everyone. That change will only accelerate.

The disruptive aspect of the migration involves the (welcome) competition between the plethora of different solutions created to address the various application spaces involved. The tension within these competitive spaces is made more difficult by the need to create regulations and standards for the applications involved. This regulatory activity is needed, but it lags behind the development curve of many of the solutions and is driven by the capabilities of the leaders in each space.

Bandwidth Bandwagon

One of the biggest issues in our modern world is the basic one of bandwidth and latency. The management of information and the ability to route and transmit data quickly and effectively drive large parts of society today. Robust and fast wireless infrastructures are critical to the proper functioning of any intelligent network. Currently, several solutions are jockeying for the largest share of the Cloud and IoT, including 5G, LoRaWAN, and SIGFOX, to name just the most popular.

However, the hardware side is just as critical. Fast and low-latency wireless is not a magic wand. In fact, the growth of Edge Computing embodies the natural migration in computing networks, one that has been going on since the computer was invented. The drive to put as much functionality as possible in the hands of the user is not a new one.

In the old-timey days, in a “Thin Client” system, the mainframe sat in the basement of a building and served a number of passive terminals in a network. The hard-wired system had no real latency issues, and in normal usage, time-sharing the power of the mainframe was sufficient. However, there were applications that needed more, and the creation of the desktop PC ushered in a new generation of computing.

Computing at the Edge

Today, Edge Computing is the latest iteration of this drive. Part of the reason for putting more computing power at the edge of the Cloud is to reduce dependence on wireless latency and bandwidth, but another major part deals with the clash between centralized and distributed management philosophies. The more power that exists at the point of application, the less reliant the system is on centralized control.
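To make the latency and bandwidth trade-off concrete, here is a minimal sketch in Python that compares a rough response-time estimate for sending data to the Cloud against processing it on the edge device. The function names, the simple additive timing model, and every number in the example are illustrative assumptions, not measurements from any real network or product.

```python
def cloud_response_ms(payload_bytes, uplink_bps, round_trip_ms, cloud_compute_ms):
    """Rough estimate: time to push the payload over the wireless uplink,
    plus network round trip, plus processing time in the Cloud.
    (Simplified additive model; ignores retries, queuing, and downlink size.)"""
    transfer_ms = payload_bytes * 8 * 1000 / uplink_bps
    return transfer_ms + round_trip_ms + cloud_compute_ms


def edge_response_ms(edge_compute_ms):
    """Processing on the device itself involves no wireless transfer."""
    return edge_compute_ms


def choose_placement(payload_bytes, uplink_bps, round_trip_ms,
                     cloud_compute_ms, edge_compute_ms):
    """Return 'edge' or 'cloud', whichever has the lower estimated response time."""
    cloud = cloud_response_ms(payload_bytes, uplink_bps, round_trip_ms, cloud_compute_ms)
    edge = edge_response_ms(edge_compute_ms)
    return "edge" if edge <= cloud else "cloud"


if __name__ == "__main__":
    # Hypothetical sensor frame: 200 kB over a 10 Mbit/s uplink with a 40 ms
    # round trip. The Cloud processes it in 5 ms; the slower edge chip needs 60 ms.
    print(choose_placement(200_000, 10_000_000, 40, 5, 60))
```

Under these assumed numbers the transfer time alone outweighs the slower edge processor, so the sketch favors the edge. With a small payload or a much faster link, the same comparison can tip back toward the Cloud.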

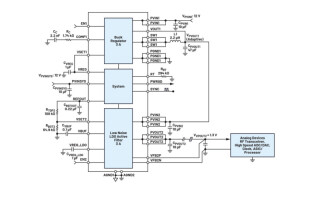

Another important aspect of Edge Computing is security. The smarter your nodes are, the harder it is to break into the network. Having as much intelligence as possible in application devices enables them to be their own gatekeepers, using the latest microcontrollers and FPGAs with embedded secure blocks and other advanced security measures. Software-based firewalls and passwords aren’t enough anymore.

Finding Balance

The most important question every business must ask itself going into the new decade is where it wants to strike a balance between wireless, hardware, and software infrastructures in the solutions it offers to the industry. This is a multifaceted question, as it involves considering all aspects of a system, from hard-wired assets to free-roving automatons.

Where to put each penny, how to integrate hardware and software task responsibilities, what capabilities and support are available from vendors and industry groups, and how to deploy that solution are all questions of balance that everyone involved in creating solutions for the next decade must address. Piecemeal and reactive activity is not only detrimental to your bottom line; it also slows the needed migration to a new smart infrastructure.