Flex Logix Inc.

eFPGA: Taking the Cost, Size, and Power Consumption out of FPGA Without Sacrificing Speed or Programmability - Blog

June 02, 2023: Over the years, FPGAs have made their way into a wide variety of applications – starting in low-volume applications and migrating into high-growth areas such as cloud data centers and communications systems. Programmability is key in many situations where algorithms, standards, and customer needs are rapidly changing. However, this advantage has also come at a cost, because FPGAs are power-hungry and take up valuable space.

Flex Logix Announces Integrated mini-ITX for Edge and Embedded AI Deployment - News

September 27, 2022: MOUNTAIN VIEW, Calif. – Flex Logix Technologies announced the InferX Hawk, a hardware and software-ready mini-ITX x86 system enabling the customization, build, and deployment of edge and embedded AI systems.

Flex Logix and CEVA Announce First Flexible DSP ISA - News

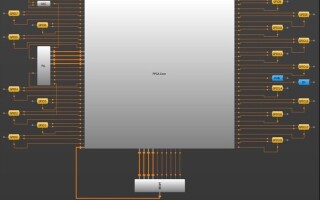

June 27, 2022: Flex Logix Technologies and CEVA announced the world’s first successful silicon implementation using Flex Logix’s EFLX embedded FPGA (eFPGA) connected to a CEVA-X2 DSP instruction extension interface.

FLEX LOGIX LEADS eFPGA MARKET WITH MORE THAN 17 LICENSED CUSTOMERS - Press Release

April 08, 2022: Adoption of Flex Logix® EFLX embedded FPGA technology is accelerating in production ASICs and SoCs.

Flex Logix Announces Production Availability of InferX X1M Boards for Edge AI Vision Systems - News

March 29, 2022: Flex Logix Technologies announced production availability of its InferX X1M boards. At roughly the size of a stick of gum, the new InferX X1M boards pack high-performance inference capabilities into a low-power M.2 form factor for space- and power-constrained applications such as robotic vision, industrial, security, and retail analytics.

Product of the Week: Flex Logix InferX X1M Edge Inference Accelerator - Story

March 29, 2022: Every type of edge AI has three hard-and-fast technical requirements: low power, small form factor, and high performance. Of course, what constitutes “small,” “power efficient,” or “high performance” varies by use case and can describe everything from small microcontrollers to edge servers, but usually you must sacrifice at least one to get the others.

However, one solution that can address everything from edge clouds to endpoints without sacrifice is the FPGA.

Why GPUs are Great for Training, but NOT for Inferencing - Blog

March 23, 2022: In the tech industry, you can hardly have a conversation without someone mentioning inference, artificial intelligence (AI), and machine learning (ML). However, it’s important to note that while all these terms are interconnected, they are also vastly different.